- Blog

- Metal slug bosses

- Adobe pdf printer driver for windows 7 64-bit

- Icap browser

- Hp laserjet 1018 driver mac elcaptain

- C5 corvette projector headlights install

- Webscraper follow links to another page

- Ni autoclicker

- Kombucha juices

- The inner game of chess

- Autodesk autocad lt 2016

- Openoffice pdf editing

- Whm cpanel

- Dj studio 2 apk free download

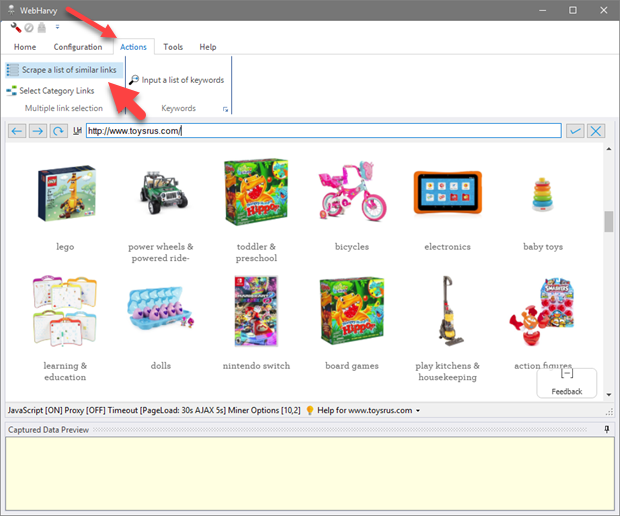

In my previous job at Sendwithus, we’d been having trouble writing performant concurrent systems in Python. After many attempts, we came to the conclusion that Python just wasn’t suitable for some of our high-throughput tasks, so we started experimenting with Go as a potential replacement.

After making it all the way through the Golang Interactive Tour, which I highly recommend if you haven’t done it already, I wanted to build something real. The last task in the Go tour is to build a concurrent web crawler, but it fakes the fun parts, like making HTTP requests and parsing HTML. That is what motivated me to open my IDE and try it myself.

This post walks you through the steps I took to build a simple web scraper in Go, covering:

- using the net/http package to fetch a web page
- using the /x/net/html package to parse an HTML document
- using Go concurrency with multi-channel communication

To keep this tutorial short, I won’t be accommodating those of you who haven’t yet finished the Go tour. The tour will teach you everything you need to know to follow along.

Building a Web Scraper

As I mentioned in the introduction, we’ll be building a simple web scraper in Go. Note that I didn’t say web crawler, because our scraper will only go one level deep (maybe I’ll cover crawling in another post). We’re going to build a basic command-line tool that takes seed URLs as input, scrapes them, and prints the links it finds on those pages. Here’s an example of it in action:

$ go run main.go

Now that we know what we’re building, let’s get to the fun part: putting it together.
